Category: Quantum Mechanics

-

Measurement, Decoherence, and Why Quantum Outcomes Appear Stable

Quantum mechanics is often presented as fundamentally mysterious, especially when it comes to measurement. A quantum system can exist in a superposition of possibilities, yet measurements yield definite outcomes. This apparent tension has motivated a wide range of interpretations, from wavefunction collapse to many-worlds to strongly observer-centered views. A more…

-

Hawking radiation: a weight-loss program for black holes – another black hole conundrum and a cute connection between black holes and quantum field theory

Coming on the heels of the apparent success of Wegovy and Ozempic for obese humans, a situation that I am still very skeptical about for its long-term effects, I thought I would write a post about how Hawking radiation causes black holes to lose mass. It is subtle and not…

-

A story of commutators

The conceptual step that took humans from their pre-conceived “classical” notions of the world to the “quantum” notion was the realization that measurements don’t commute. This means, as an example, that if you measure the position of a particle exactly, you cannot simultaneously ascribe to it an infinitely precise momentum.…

-

Schrodinger’s Cat Lives again!

This article concerns a new paper that I recently submitted and that has now been published. It deals with a peculiar feature of quantum mechanics (and also of classical mechanics): the Laws of Physics appear indifferent to the direction of time. If you play a video of two balls colliding…

-

Error Correcting Codes and the Quantum version

This article was inspired by a very nice article in Quanta Magazine (by Natalie Wolchover) about the connection between error-correction codes and space-time. I thought the quantum mechanics concepts were glossed over, so I decided to expand on them a little. Electrical engineers and digital-signal-processing engineers study error correction for a…

-

Schrodinger’s Zoo

I have been enjoying reading Richard Muller’s “Now: The Physics of Time” – Muller is an extremely imaginative experimental physicist and his writings on the “arrow of time” are quite a nice compendium of the various proposed solutions. Even though none of those solutions is to my liking, they are…

-

Master Traders and Bayes’ theorem

Imagine you were walking around in Manhattan and you chanced upon an interesting game going on at the side of the road. By the way, when you see these games going on, a safe strategy is to walk on, since they usually reduce to methods of separating a lot of…

-

Special Relativity; Or how I learned to relax and love the Anti-Particle

The Special Theory of Relativity, which is the name for the set of ideas that Einstein proposed in 1905 in a paper titled “On the Electrodynamics of Moving Bodies”, starts with the premise that the Laws of Physics are the same for all observers that are traveling at uniform speeds…

-

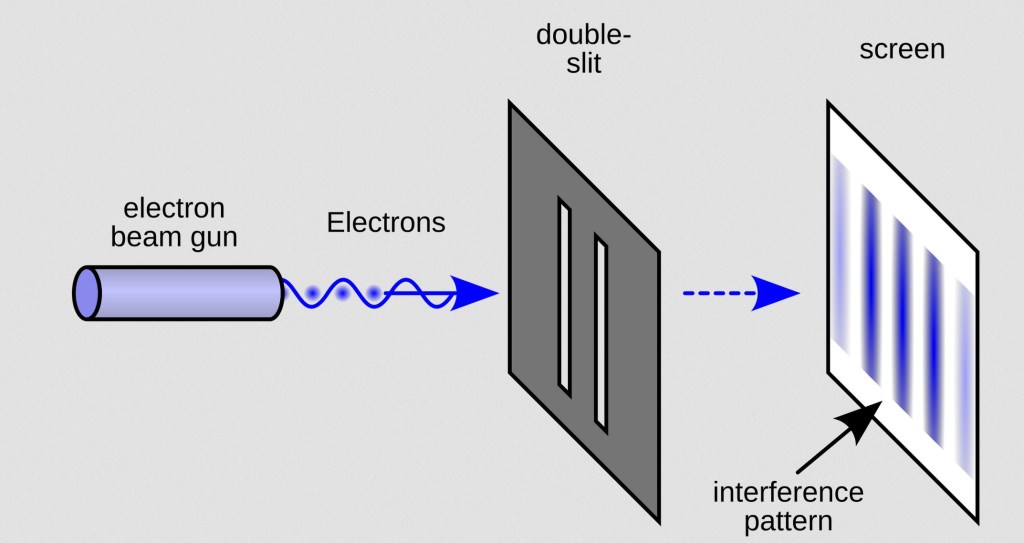

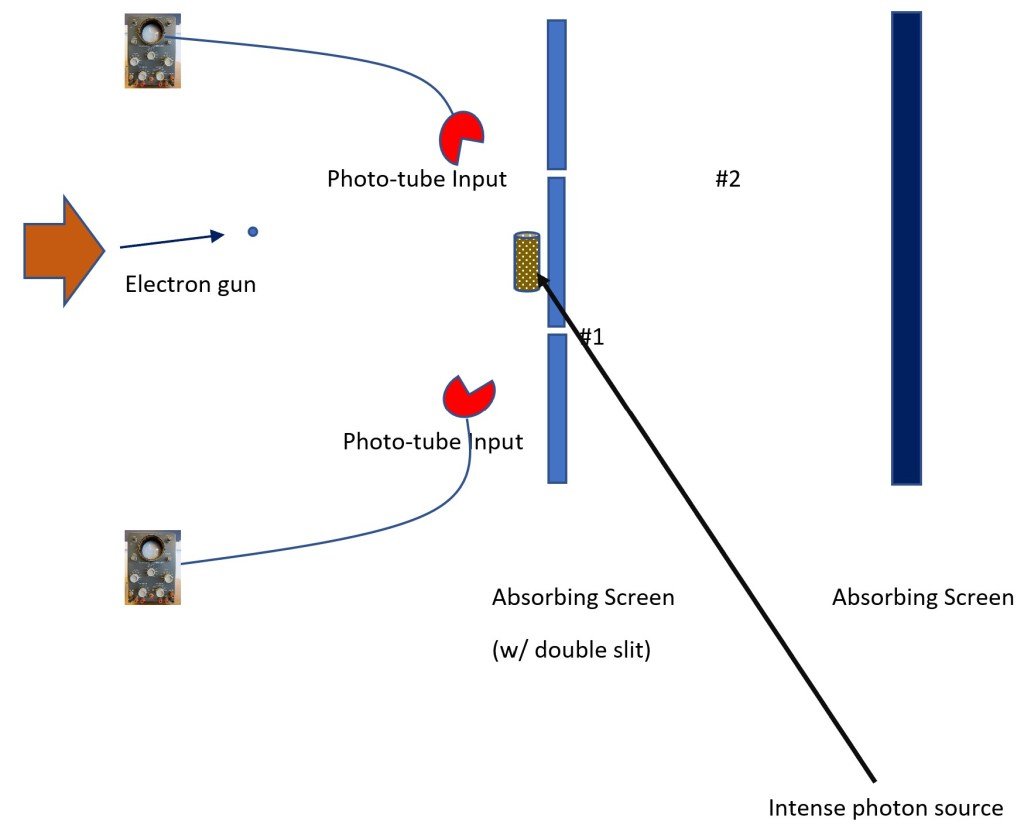

Can a quantum particle come to a fork in the road and take it?

I have always been fascinated by the weirdness of the Universe. One aspect of the weirdness is the quantum nature of things – others relate to the mysteries of Lorentz invariance, Special Relativity, the General Theory of Relativity, the extreme size and age of the Universe, the vast amount of…

-

Here’s an alternative history of how quantum mechanics came about…

Quantum Mechanics was the result of analysis of experiments that explored the emission and absorption spectra of various atoms and molecules. Once the electron and proton were discovered, very soon after the discovery of radioactivity, it was theorized that the atom was an electrically neutral combination of protons and electrons.…